Welcome to the session 5 of the Beam Learning Months!

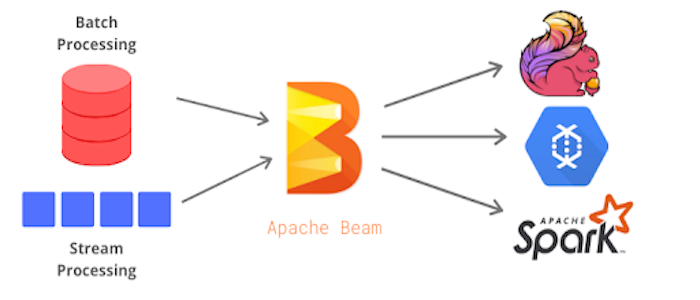

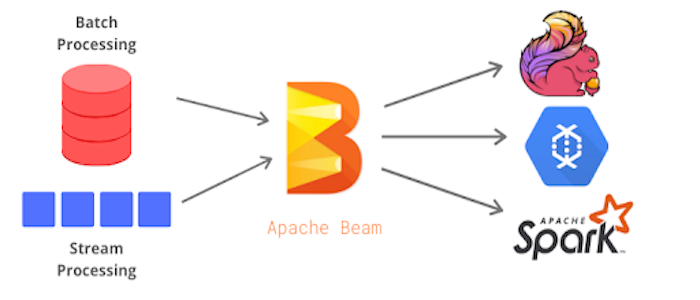

Apache Beam is an open source, unified model for defining both batch and streaming data-parallel processing pipelines. Using one of the open source Beam SDKs, you build a program that defines the pipeline. The pipeline is then executed by one of Beam’s supported distributed processing back-ends, which include Apache Apex, Apache Flink, Apache Spark, and Google Cloud Dataflow.

In this session we will learn how eBay builds feature pipelines with Apache Beam.

To unify feature extraction and selection in online and offline, to speedup E2E iteration for model training, evaluation and serving, to support different types (streaming, runtime, batch) of features, etc. eBay leverages Apache Beam for their streaming feature SDK as a foundation to integrate with Kafka, Hadoop, Flink, Airflow and others in eBay.

Dont forget to sign up to the other sessions on Beam learning months:

May 6 - An interactive introduction to Apache Beam using Jupyter Notebooks: watch recording, and see the example notebook with dependencies configured in colab

May 13 - Best practices towards a production-ready pipeline by Pablo Estrada, Apache Beam PMC member

May 20 - Introduction to the Spark Runner by Ismaël Mejía, Apache Beam & Apache Avro PMC member

May 27 - Introduction to the Flink Runner by by Max Michels, Apache Beam and Apache Flink PMC member

I’m a technology evangelist for big data open source in eBay, from distributed crawl system, behavior data sessionization and bot detection with map reduce to spark as analyst development optimize platform for ETL, from storm lambda based application to beam event time based streaming system. I’m also hands on web dev from mvc to event sourcing, domain driven in my earlier career. I believe creative ideas come out from diversity, discussion and colorful life.